OpenAI has announced that the Sora web and app experiences will be discontinued on April 26, 2026, with the Sora API following on September 24, 2026. The first deprecation triggers in two weeks. Enterprises that built workflows on Sora since launch are not facing a model upgrade; they are facing a workflow rebuild on a four-month timeline. This is the first prominent enterprise-facing AI deprecation event of the cycle, and the precedent it sets matters more than the specific product involved.

Model deprecation is no longer a developer-tier concern. It is an enterprise governance question that deserves a place on the risk committee agenda. AI dependency without continuity guarantees is becoming a board-level risk in 2026.

The shift: dependency without continuity guarantees

The pattern of the past two years has been to build agent workflows on whichever foundation model was demonstrably best at the time, with little contractual commitment from the model provider about how long that model would remain available. Provider terms have improved (Azure OpenAI’s twelve-plus-six-month commitment for generally available models is the strongest standard in the market), but most enterprises have not negotiated equivalent terms with their chosen providers. They built on capability, not on continuity.

When the provider sunsets the model, the enterprise’s options are bad. Migrate to a successor model that may behave differently in subtle ways — requiring re-validation of every governed use case. Renegotiate at the eleventh hour for extended access at unfavorable terms. Or absorb the operational disruption of the workflow simply not working until rebuilt.

The Sora event is small in dollar terms but large in precedent. The next deprecation will involve a more enterprise-critical model, and the enterprises that did not see this one coming are not going to see that one coming either.

The role change is the addition of an AI continuity discipline

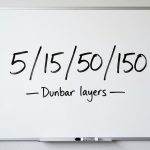

Inside enterprises that take this seriously, a discipline is emerging that did not exist in 2024: AI continuity management. The work overlaps with vendor management, disaster recovery, model risk management, and regulatory compliance, but it is structurally distinct from all of them. The discipline involves maintaining an inventory of model dependencies by workflow, negotiating continuity commitments at procurement, running successor-model regression tests on a regular cadence, and keeping each workflow's documentation complete enough that the workflow could be rebuilt on a different model.

Most enterprises have not staffed this discipline. The accountabilities are scattered across teams that do not coordinate. The procurement team negotiated the model contract a year ago without a continuity clause. The deployment team is building production dependencies on the model without thinking about migration cost. The risk team has not flagged model deprecation as a category. When the deprecation announcement lands, the company finds out it has no plan.

The fix is straightforward in concept and slow in practice. Add continuity commitments to the procurement template. Build a model-dependency inventory. Designate an owner for AI continuity at the executive level. Run quarterly successor-model tests. None of this is hard. It is just unglamorous work that does not get done unless someone owns it.
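A quarterly successor-model test can start as a fixed set of prompts with pass/fail checks, run against both the current model and its candidate successor. The sketch below is a minimal illustration under stated assumptions: `Case`, `regression_report`, and the two stub models are hypothetical names, and the stubs stand in for real provider clients, which are not shown.

```python
from typing import Callable, NamedTuple, Sequence


class Case(NamedTuple):
    """One governed use case: an input and a pass/fail check on the output."""
    prompt: str
    check: Callable[[str], bool]


def regression_report(model: Callable[[str], str], cases: Sequence[Case]) -> dict:
    """Run every case through `model` and summarize which ones fail."""
    failed = [c.prompt for c in cases if not c.check(model(c.prompt))]
    total = len(cases)
    pass_rate = (total - len(failed)) / total if total else 1.0
    return {"total": total, "failed": failed, "pass_rate": pass_rate}


# Hypothetical stubs standing in for real model clients.
def current_model(prompt: str) -> str:
    return "APPROVE" if "under limit" in prompt else "ESCALATE"


def successor_model(prompt: str) -> str:
    # Same decision logic, but a subtly different output format (lowercase).
    return "approve" if "under limit" in prompt else "ESCALATE"


cases = [
    Case("invoice under limit", lambda out: out == "APPROVE"),
    Case("invoice over limit", lambda out: out == "ESCALATE"),
]

baseline = regression_report(current_model, cases)
candidate = regression_report(successor_model, cases)

# Flag the migration for review if the successor fails cases the baseline passes.
needs_review = candidate["pass_rate"] < baseline["pass_rate"]
```

In this toy run the successor fails one of the two cases purely because of a formatting difference. That is the kind of subtle behavioral change that forces re-validation of a governed use case, and it is cheaper to find on a regular cadence than under a deprecation deadline.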

The strategic consequence is renewed buy-versus-build math

Continuity risk changes the calculus of where to deploy AI capability. For workflows where the cost of unplanned migration is high (regulated workflows, mission-critical operations, customer-facing experiences with high switching costs), three options look stronger than they did in 2024: fine-tuning a frontier model into a controlled deployment, partnering with a vendor that offers enterprise-grade continuity commitments, or building on open-weight models the enterprise can host indefinitely. The case for relying on whichever model is best on a benchmark this quarter is weaker.

The math is not simple. Open-weight models lag the frontier, sometimes meaningfully. Self-hosting carries operational cost that the proprietary providers absorb. The vendor lock-in to a single proprietary provider, even with the best continuity terms, is a different kind of risk than open-weight self-hosting carries. Each enterprise has to make this trade-off based on the workflow’s tolerance for capability lag versus its tolerance for continuity disruption.

What is no longer defensible in 2026 is treating model continuity as someone else’s problem. The Sora sunset is small. The next one will not be.

So what: what boards should do this quarter

Add model deprecation to the risk committee agenda. The first deprecation event lands in two weeks. The board should at minimum understand which workflows are exposed and what the migration plans are.

Demand a model-dependency inventory. It should record which workflows depend on which models from which providers, and under which contractual continuity commitments. If this inventory does not exist, building it is the priority.
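As a rough illustration of what such an inventory could hold, here is a minimal sketch. The field names, the `retiring_within` and `without_commitment` helpers, the workflow names, and the model identifiers are all hypothetical. Only the September 24, 2026 Sora API date comes from the announcement cited in this piece.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class ModelDependency:
    workflow: str
    provider: str
    model: str                          # illustrative identifier, not an official name
    retirement_date: Optional[date]     # None if the provider has announced nothing
    commitment_months: Optional[int]    # None if the contract has no continuity clause
    has_migration_plan: bool


def retiring_within(deps: List[ModelDependency], today: date,
                    horizon_days: int) -> List[ModelDependency]:
    """Dependencies with an announced retirement inside the horizon, soonest first."""
    hits = [
        d for d in deps
        if d.retirement_date is not None
        and 0 <= (d.retirement_date - today).days <= horizon_days
    ]
    return sorted(hits, key=lambda d: d.retirement_date)


def without_commitment(deps: List[ModelDependency]) -> List[ModelDependency]:
    """Dependencies procured without any contractual continuity commitment."""
    return [d for d in deps if d.commitment_months is None]


inventory = [
    ModelDependency("marketing-video-drafts", "OpenAI", "sora-api",
                    date(2026, 9, 24), None, False),
    ModelDependency("claims-triage", "ExampleProvider", "example-model-v1",
                    None, 18, True),
]

# Hypothetical review date, two weeks before the web and app sunset.
exposed = retiring_within(inventory, today=date(2026, 4, 12), horizon_days=180)
unprotected = without_commitment(inventory)
```

Even a flat list like this answers the two questions a risk committee is likely to ask first: what retires soon, and what was procured without a continuity clause.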

Reconsider the buy-versus-build posture for mission-critical AI workflows. The 2024 default of using whichever proprietary model is best was rational at the time. In 2026, with the deprecation precedent now visible, that default deserves explicit reconsideration. Continuity is becoming a form of resilience. The boards that price it in this quarter will not be the ones rebuilding workflows under deadline.

References and links

- OpenAI Help Center — What to know about the Sora discontinuation (web and app sunset April 26, 2026; API sunset September 24, 2026)

- OpenAI Help Center — Sora 1 Sunset FAQ

- OpenAI Developer Docs — Deprecations policy and schedule

- Microsoft Azure OpenAI — Model retirements and deprecation schedule

- InformationWeek — The sunsetting of Sora: A hard lesson in AI portfolio resilience