What was announced

Two announcements in the week of March 2–8, 2026 redrew the agent landscape. Anthropic’s Model Context Protocol crossed 97 million installs, with every major AI provider now shipping MCP-compatible tooling — moving the protocol from experiment to default infrastructure for tool-calling agents. Apple confirmed that the redesigned, AI-powered Siri targeted for release alongside iOS 26.4 will be powered by Google’s Gemini model running on Apple’s Private Cloud Compute. In parallel, Anthropic rolled out memory features to all Claude users and deployed Opus 4.6 as an add-in inside Microsoft PowerPoint and Excel.

What it means

The MCP install count makes the connectivity layer between agents and tools a solved problem at the standards level. That is a meaningful shift. For two years, the friction in shipping agents was that every tool integration was bespoke; the integration debt scaled with the number of agent-tool pairs, so each new tool or agent multiplied the work. With MCP at default-infrastructure scale, each tool and each agent implements the protocol once, so the cost of an additional integration is closer to fixed than linear, and the bottleneck moves from connectivity to orchestration and governance.
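One way to read the scaling argument above is as back-of-the-envelope arithmetic. The sketch below assumes that a bespoke integration means one hand-built adapter per agent-tool pair, and that a shared protocol needs one implementation per agent and one per tool. The function names and the counts are illustrative assumptions, not measured figures.

```python
def bespoke_adapters(num_agents: int, num_tools: int) -> int:
    """One hand-built adapter for every agent-tool pair (assumed model)."""
    return num_agents * num_tools


def protocol_adapters(num_agents: int, num_tools: int) -> int:
    """One protocol client per agent plus one protocol server per tool."""
    return num_agents + num_tools


def marginal_cost_of_new_tool(num_agents: int, num_tools: int) -> tuple[int, int]:
    """Adapters added by introducing one more tool: (bespoke, protocol)."""
    bespoke = bespoke_adapters(num_agents, num_tools + 1) - bespoke_adapters(num_agents, num_tools)
    shared = protocol_adapters(num_agents, num_tools + 1) - protocol_adapters(num_agents, num_tools)
    return bespoke, shared


if __name__ == "__main__":
    # Hypothetical estate: 5 agents, 20 tools.
    print(bespoke_adapters(5, 20), protocol_adapters(5, 20))
    print(marginal_cost_of_new_tool(5, 20))
```

Under these assumptions, adding a tool in the bespoke model costs one adapter per existing agent, while in the shared-protocol model it costs one implementation regardless of how many agents exist. That is the sense in which the cost becomes closer to fixed.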

Apple’s decision to rent cognition from Google for Siri is the more strategically loaded story. It signals that even the most vertically integrated consumer-tech company in the world has concluded that building competitive frontier-model capability inside the company is not the right capital allocation. The Private Cloud Compute envelope handles the data-sovereignty argument. The Gemini choice handles the capability argument. The combination is an explicit acknowledgment that frontier-model capability has consolidated at a tier of providers most companies will rent from, not build alongside.

Andreas’s view

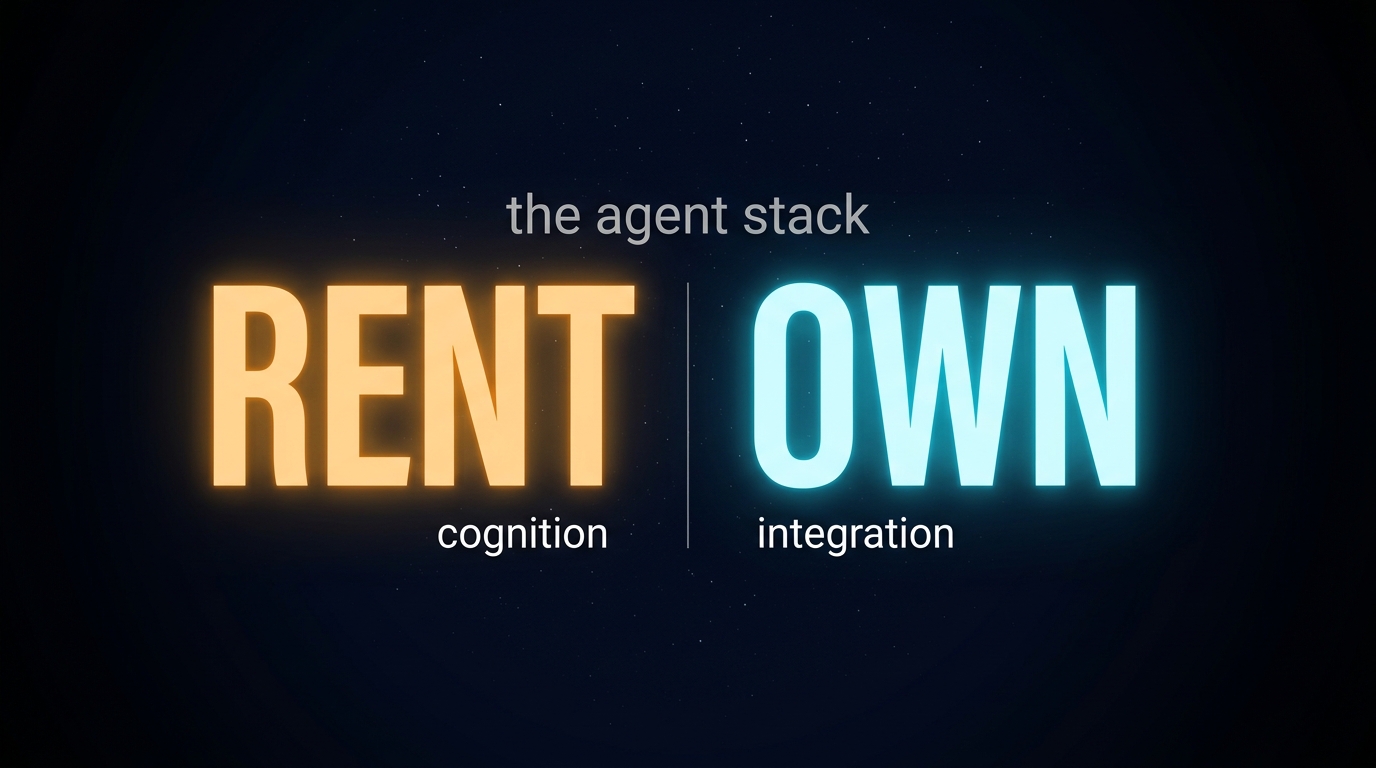

My read on this: the agent stack is settling into a recognizable shape. Standards layer (MCP, becoming generic). Frontier-model layer (a small number of providers — OpenAI, Anthropic, Google, with regional players underneath). Application layer (where most enterprise value is created). The interesting strategic action for the next 24 months is in the application layer, where the questions are which workflows to embed, which data to expose, and which orchestration logic to own.

I don’t think Apple’s choice is anomalous. It is the start of a wave. Companies that have been building internal frontier-model capabilities will increasingly find that the math does not work: the capex is consumer-internet scale, the talent is concentrated at three or four employers, and the gap between the frontier and a “good enough” internal model widens every six months. The economically rational answer for almost everyone is to rent the cognition and own the integration and the data envelope around it. Apple has now made that a defensible board-level position.

The way I see it: the most important architectural question right now is whether the cognition layer (rented, frontier-model, expensive but improving exponentially) is clearly distinguished from the integration layer (owned, workflow-specific, where the moat actually lives). Where those layers are blurred, I’d expect companies to find themselves overpaying on one side and under-investing on the other. The Apple-Google deal is the clean reference architecture for how that separation can look.
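To make the separation concrete, here is a minimal sketch of what keeping the two layers distinct could look like in code. Everything in it is hypothetical: `CognitionProvider`, `EchoProvider`, and `InvoiceWorkflow` are illustrative names, and the provider is an offline stand-in, not any vendor's real client. The point is only that the owned workflow depends on a narrow interface, so the rented model can be swapped without touching the workflow or its data.

```python
from dataclasses import dataclass
from typing import Protocol


class CognitionProvider(Protocol):
    """Rented layer: any frontier-model vendor that can complete a prompt."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoProvider:
    """Offline stand-in for a vendor client (hypothetical, for illustration)."""

    name: str

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class InvoiceWorkflow:
    """Owned layer: workflow logic and the data envelope live here."""

    provider: CognitionProvider
    customer_records: dict[str, str]

    def summarize(self, customer_id: str) -> str:
        # Only the record this workflow selects is passed to the provider.
        record = self.customer_records[customer_id]
        return self.provider.complete(f"Summarize invoice status: {record}")


if __name__ == "__main__":
    workflow = InvoiceWorkflow(EchoProvider("vendor-a"), {"c-1": "2 invoices overdue"})
    print(workflow.summarize("c-1"))
    # Swapping the rented layer leaves the owned layer unchanged.
    workflow.provider = EchoProvider("vendor-b")
    print(workflow.summarize("c-1"))
```

Where the layers are blurred, the equivalent of `InvoiceWorkflow` would call a specific vendor's client directly, and changing providers would mean rewriting workflow code.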

Three things I’m watching

As this plays out:

- I’ll be watching whether companies architect the cognition layer and the integration layer separately — treating frontier-model providers as utilities while building proprietary infrastructure around workflow integration and the data envelope.

- The companies that preserve optionality will be the ones that default to MCP-compatible tooling for new agent integrations. The standards layer is no longer a strategic differentiator — the question is how quickly organizations stop treating it as one.

- I’ll be watching how internal frontier-model build efforts hold up against the Apple-Gemini reference case. Where differentiation rests on owning the model, I’m interested to see whether those bets come with a credible 36-month capex and capability projection — and what happens when they don’t.

References and related signals

- Crescendo AI: latest AI news and developments

- Related signal: Anthropic’s Opus 4.6 PowerPoint and Excel integrations move frontier-model capability deeper into default enterprise tooling, accelerating the rented-cognition pattern.

- Related signal: NVIDIA GTC 2026 (March) emphasized agentic frameworks and Fortune 500 production deployments — the application layer is where the next wave of enterprise AI value is being created.

- Related signal: 95% of generative AI pilots still fail to reach production. Solving the connectivity layer does not solve the operating-model layer.

- Related signal: Apple choosing Gemini over OpenAI for Siri changes the competitive math for every enterprise still scoping a frontier-model partnership.