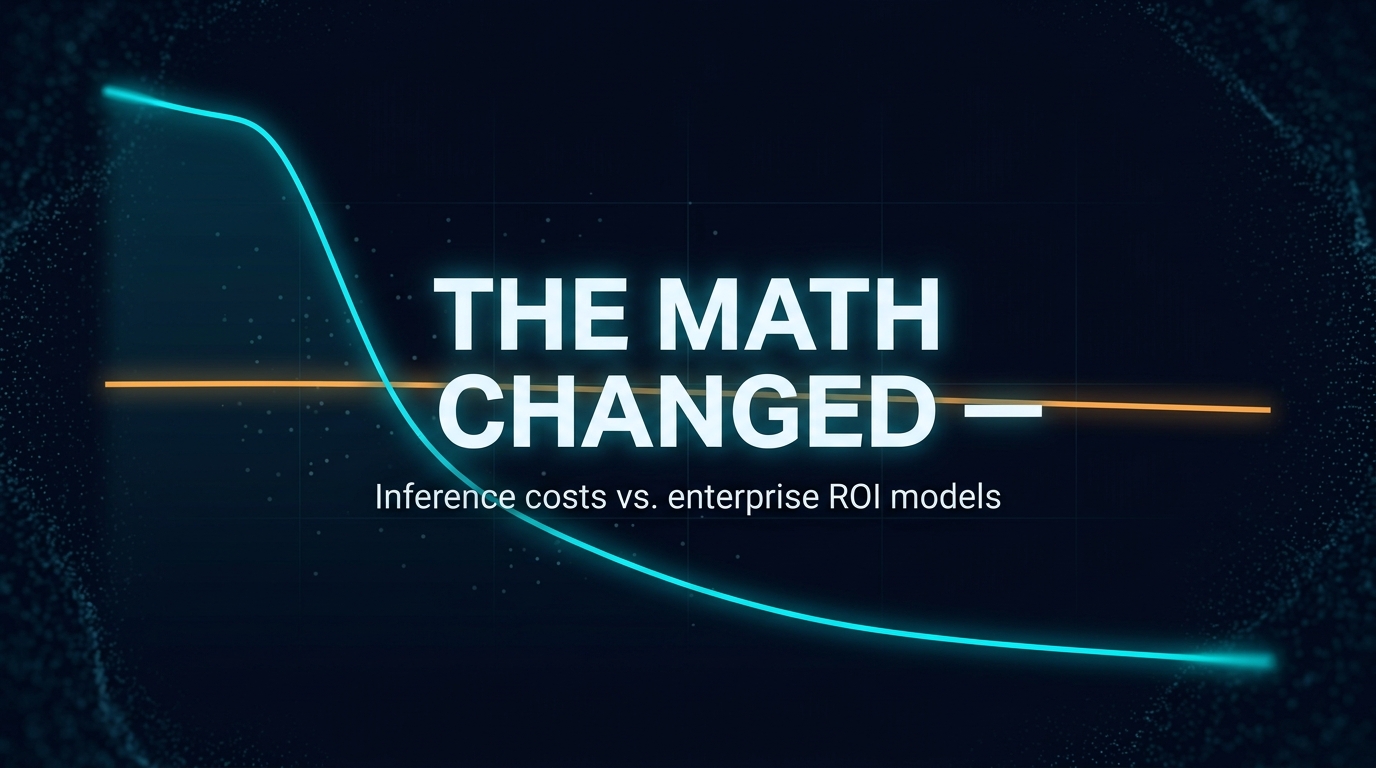

GPT-4 class inference cost $20 per million tokens at launch in early 2023. In April 2026, equivalent performance runs $0.40 per million tokens. Most enterprise AI business cases were built somewhere in the middle, and they have not been updated since.

That gap is not a technology story. It is an arithmetic problem wearing a strategy hat.

What moved

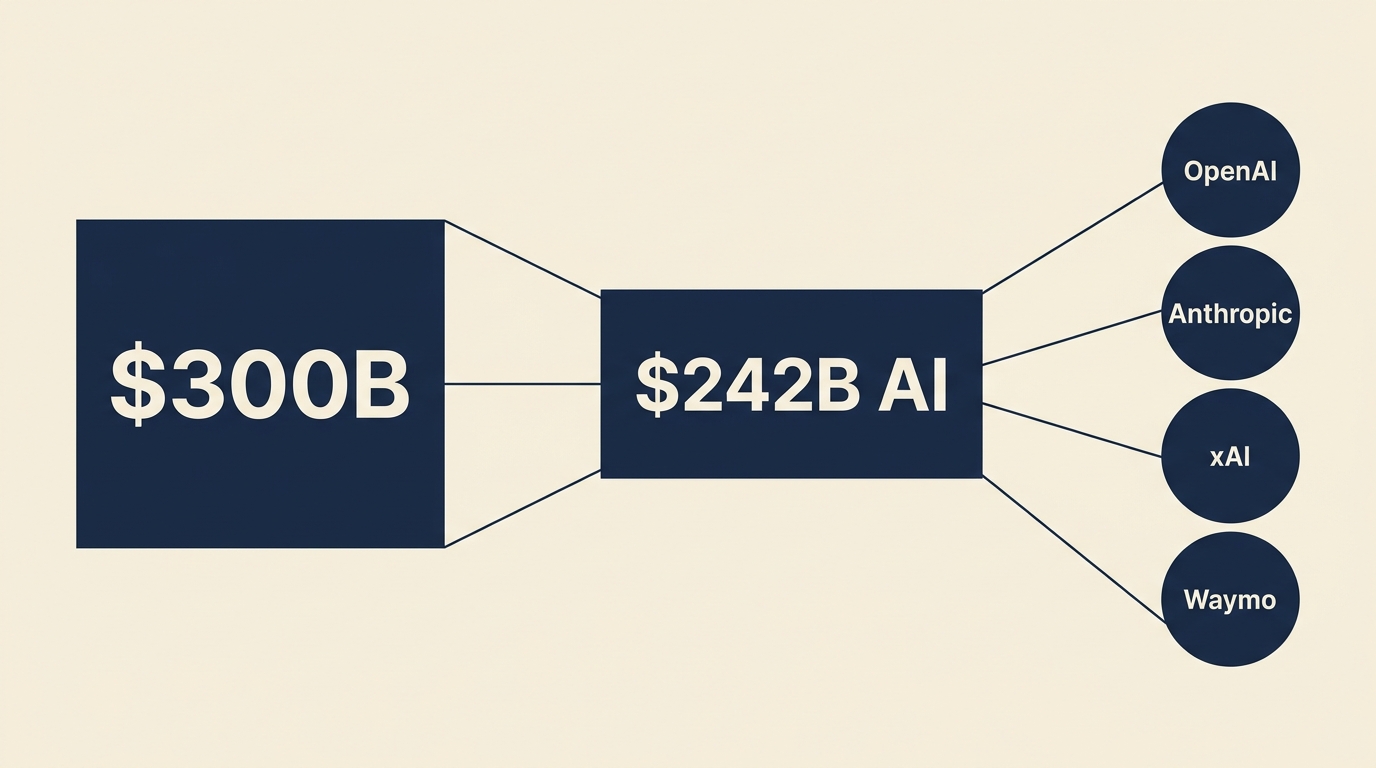

Inference costs have declined faster than the bandwidth price collapse of the early internet era, faster than PC compute, and considerably faster than any enterprise finance model anticipated. Artificial Analysis tracks it live: the cheapest capable models today run under $0.50 per million tokens. A flagship model that cost $10 per million tokens eighteen months ago now costs $2–3. The price range between the cheapest and most expensive capable options has widened past a thousand-to-one.

The driver is compounding. Better training efficiency produced more capable models at lower operating cost. Competition between providers accelerated the pass-through. Specialised chips entered the stack. The result is a cost curve that looks less like traditional software pricing and more like solar panel economics: each year’s actual prices land below where the previous year’s curve said they would be.
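To put a rate on that curve, here is a minimal sketch that converts the two price points quoted at the top of this piece ($20 at launch, $0.40 in April 2026) into an implied constant yearly decline. The three-year window is my rounding of "early 2023 to April 2026", and the function name is illustrative, not taken from any library.

```python
def annualised_decline(start_price: float, end_price: float, years: float) -> float:
    """Constant yearly fractional price drop that takes start_price to end_price."""
    if start_price <= 0 or end_price <= 0 or years <= 0:
        raise ValueError("prices and years must be positive")
    return 1.0 - (end_price / start_price) ** (1.0 / years)


# Figures from the article, in USD per million tokens.
launch_price = 20.00   # GPT-4 class inference, early 2023
current_price = 0.40   # equivalent performance, April 2026

# Assumption: roughly three years between the two price points.
years_elapsed = 3.0

rate = annualised_decline(launch_price, current_price, years_elapsed)
print(f"Total decline: {launch_price / current_price:.0f}x")
print(f"Implied annual price drop: {rate:.0%}")
```

Under that three-year assumption the implied drop is roughly 73% a year. A budget model that holds inference cost flat is therefore off by about that much after twelve months, before any volume effect.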

What did not move

Enterprise AI business cases.

S&P Global found that 42% of companies abandoned most of their AI projects in 2025. Cost and unclear value were the top reasons cited. IBM put the share of AI initiatives delivering expected ROI at 25%. MIT found that 95% of AI pilots delivered zero measurable P&L impact (MIT NANDA, State of AI in Business, 2025).

These numbers are real. But the interpretation of why projects fail is often imprecise.

Projects approved in 2023 and 2024 were scoped against the pricing environment of 2023 and 2024. The cost models that informed the go/no-go decisions used token prices that no longer exist. The ROI denominators were anchored to infrastructure assumptions from a period when GPT-4 access cost $10–20 per million tokens. The business cases that were rejected on cost grounds — the ones that landed below the internal ROI hurdle by a thin margin — were rejected against a cost basis that is now a fraction of what it was.

That is not a technology failure. It is a modeling lag.

Andreas’s view

My read on this: there are two different things getting conflated in the ROI conversation. One is genuinely poor outcomes — wrong use case, shallow integration, insufficient change management. That is real and deserves scrutiny. The other is a systematic understatement of AI’s economic potential because the cost assumptions in the business case never got refreshed. Those two phenomena look identical in the data.

I don’t think the 42% abandonment rate or the 25% ROI hit rate tells us much about what AI can do at today’s prices. It tells us how enterprises perform against business cases built on 2023 assumptions. The projects that got killed for cost reasons in Q4 2024 would look different rerun against Q2 2026 pricing.

My expectation is that the organisations getting ahead of this are running a specific exercise that most are not: taking the cost assumptions out of every AI initiative that was rejected or stalled in 2023–2025, replacing them with current market rates, and seeing which cases cross the ROI threshold now. Not all of them will. But some will — and the decision to revisit them is a spreadsheet exercise, not a technology project.
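The exercise can be sketched in a few lines. Everything below is illustrative: the three business cases, their benefit and cost figures, the 50% ROI hurdle, and the simple ROI formula are hypothetical assumptions of mine, not data from any of the surveys cited above. Only the $0.40 current price comes from this piece, and the $10 old price is the low end of the $10–20 range mentioned earlier.

```python
from dataclasses import dataclass


@dataclass
class BusinessCase:
    name: str
    annual_benefit: float          # USD per year
    fixed_annual_cost: float       # USD per year: integration, staff, licences
    annual_tokens_millions: float  # expected inference volume, millions of tokens


def roi(case: BusinessCase, price_per_million: float) -> float:
    """Simple ROI: (benefit - total cost) / total cost, at a given token price."""
    total_cost = case.fixed_annual_cost + case.annual_tokens_millions * price_per_million
    return (case.annual_benefit - total_cost) / total_cost


def newly_viable(cases, old_price, new_price, hurdle):
    """Names of cases that missed the hurdle at old_price but clear it at new_price."""
    return [c.name for c in cases if roi(c, old_price) < hurdle <= roi(c, new_price)]


# Hypothetical rejected-or-stalled portfolio (all numbers invented for illustration).
cases = [
    BusinessCase("support triage", 900_000, 300_000, 40_000),
    BusinessCase("contract review", 400_000, 350_000, 5_000),
    BusinessCase("code assistant", 1_200_000, 400_000, 10_000),
]

OLD_PRICE = 10.00  # USD per million tokens, assumed price in the original case
NEW_PRICE = 0.40   # USD per million tokens, current price quoted in the article
HURDLE = 0.50      # hypothetical internal ROI hurdle

for c in cases:
    print(f"{c.name}: ROI {roi(c, OLD_PRICE):.0%} then, {roi(c, NEW_PRICE):.0%} now")
print("Worth revisiting:", newly_viable(cases, OLD_PRICE, NEW_PRICE, HURDLE))
```

With these made-up inputs, only the inference-heavy case flips. The one dominated by fixed costs stays below the hurdle, and the one that already cleared it is not a candidate. The pattern to look for in a real portfolio is the same: cases where inference was a large share of total cost.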

Three things I’m watching:

- Whether finance teams are treating inference cost as a stable input or a variable. Most enterprise budget models treat infrastructure cost as a constant. Inference cost is not a constant — it has been declining faster than almost any other enterprise input cost in the last three years.

- The spread between unit cost and total spend. Per-token costs have collapsed, but total enterprise AI spend is forecast to jump 65% in 2026, from an average of roughly $7M to over $11M (IDC). Volume is expanding faster than unit costs are falling. The budget impact of AI is still growing, even as the underlying unit economics are dramatically more favourable than they were.

- How capital allocation committees handle the remodel request. The institutional question: if a CFO approved a 2023 AI business case that underperformed, how does the organisation handle finance coming back and saying “the cost structure changed — the case should have worked, we just used the wrong numbers”? That conversation is coming.
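The second point is worth a quick piece of arithmetic. As a deliberately crude sketch, treat spend as unit price times volume, so the implied volume growth is the spend multiple divided by the price multiple. The 65% spend increase is the IDC forecast quoted above. The unit price scenarios are hypothetical, and real AI budgets also include much more than inference, so read this as an illustration of the mechanism only.

```python
def implied_volume_multiple(spend_multiple: float, price_multiple: float) -> float:
    """If spend = price * volume, return the volume multiple implied by the other two."""
    if price_multiple <= 0:
        raise ValueError("price_multiple must be positive")
    return spend_multiple / price_multiple


# From the article: total spend forecast to rise 65% in 2026 (IDC).
spend_multiple = 1.65

# Hypothetical scenarios: next year's unit price as a fraction of this year's.
for price_multiple in (0.75, 0.50, 0.25):
    volume = implied_volume_multiple(spend_multiple, price_multiple)
    print(f"unit price x{price_multiple:.2f} -> implied volume x{volume:.1f}")
```

Even in the mildest scenario, usage has to more than double for total spend to rise 65% while unit prices fall. That is why the budget line keeps growing while each individual case gets cheaper.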

What this reveals

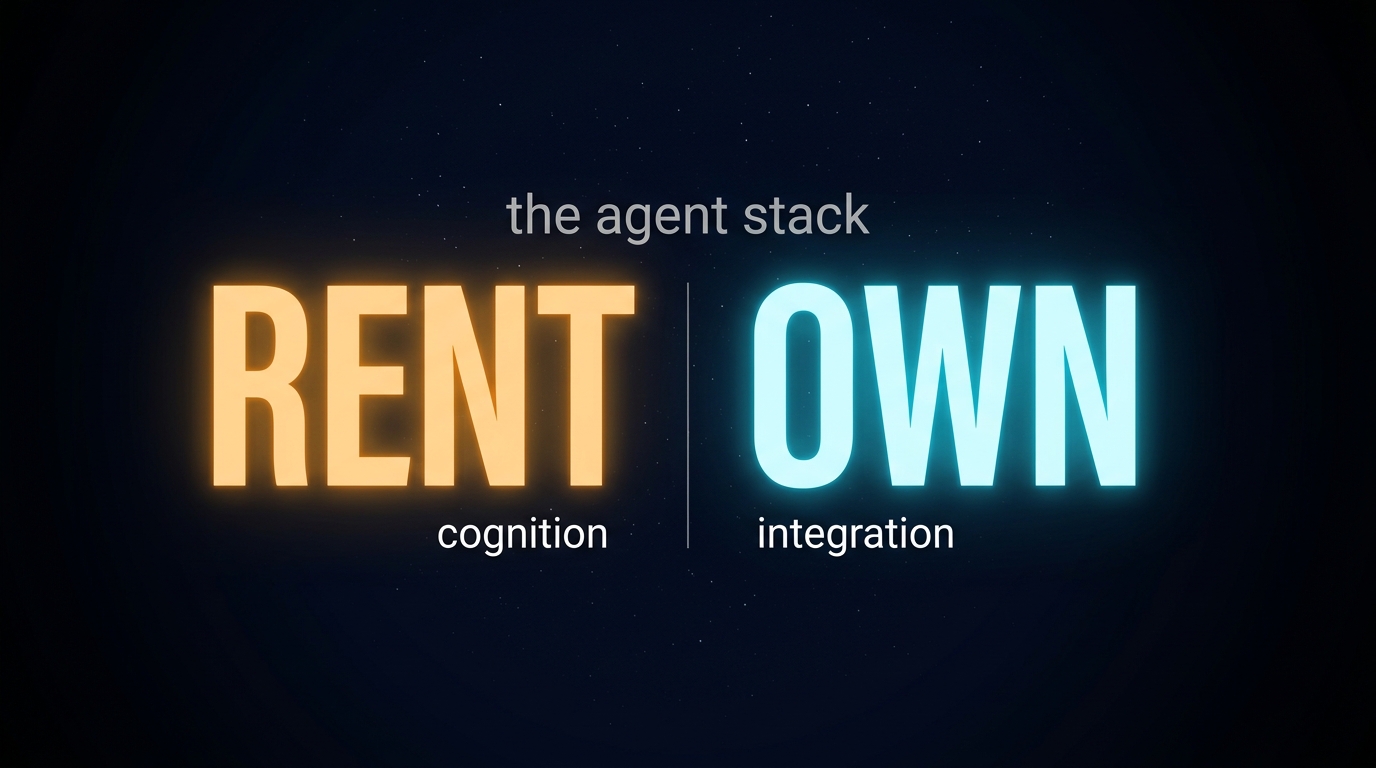

The collapse in inference cost is well-understood in developer circles. Engineers who run inference workloads reset their unit economics continuously — it is operational reality. The delay is in the enterprise business case layer, where cost assumptions travel up through approval chains, get embedded in multi-year plans, and calcify.

The cost curve does not care about the approval cycle. It moved while the slide decks were in review.

This is not an argument that all AI investments look better at current pricing — some of those failed pilots would have failed regardless, and the organisational conditions for AI success (clear scope, embedded workflows, meaningful accountability) have not gotten easier. But a non-trivial fraction of the projects that stalled on cost now live in territory where the math is different. Identifying them is a shorter path to AI ROI than starting new initiatives from scratch.